D3 ENGINE

Collaborative Approach

Innoplexus team works together with its customers to ensure optimal integration with customer processes. Before starting any new project, a scoping workshop is held with the customer to outline their major requirements and define the exact project deliverables. As a next step, a proposal document containing the project background/context, approach, deliverables, project governance, timeline and cost is submitted for customer review and approval. Upon proposal acceptance by the customer, a Master Service Agreement (MSA) and/or a Statement of Work (SoW) document is signed between the parties. As soon as the Project Order (PO) is received by Innoplexus, the project kick-off and project delivery is planned and executed as agreed.

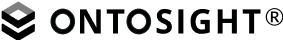

Iterative way of working

Innoplexus teams follow the agile methodology. As a first step, a minimal viable product is delivered, which is incrementally built-up, based on advanced requirements and customer feedback incorporation at regular intervals as part of milestone/project delivery meetings, thereby, improving the desired solution/end-product.

Frequent Touchpoints

Innoplexus and customer teams connect regularly to ensure alignment on goals and monitor project progress. This is ensured with the help of bi-weekly meetings for regular projects (4-6 weeks duration) and in addition, monthly steering committee meetings for bigger, complex projects (26-30 weeks duration).

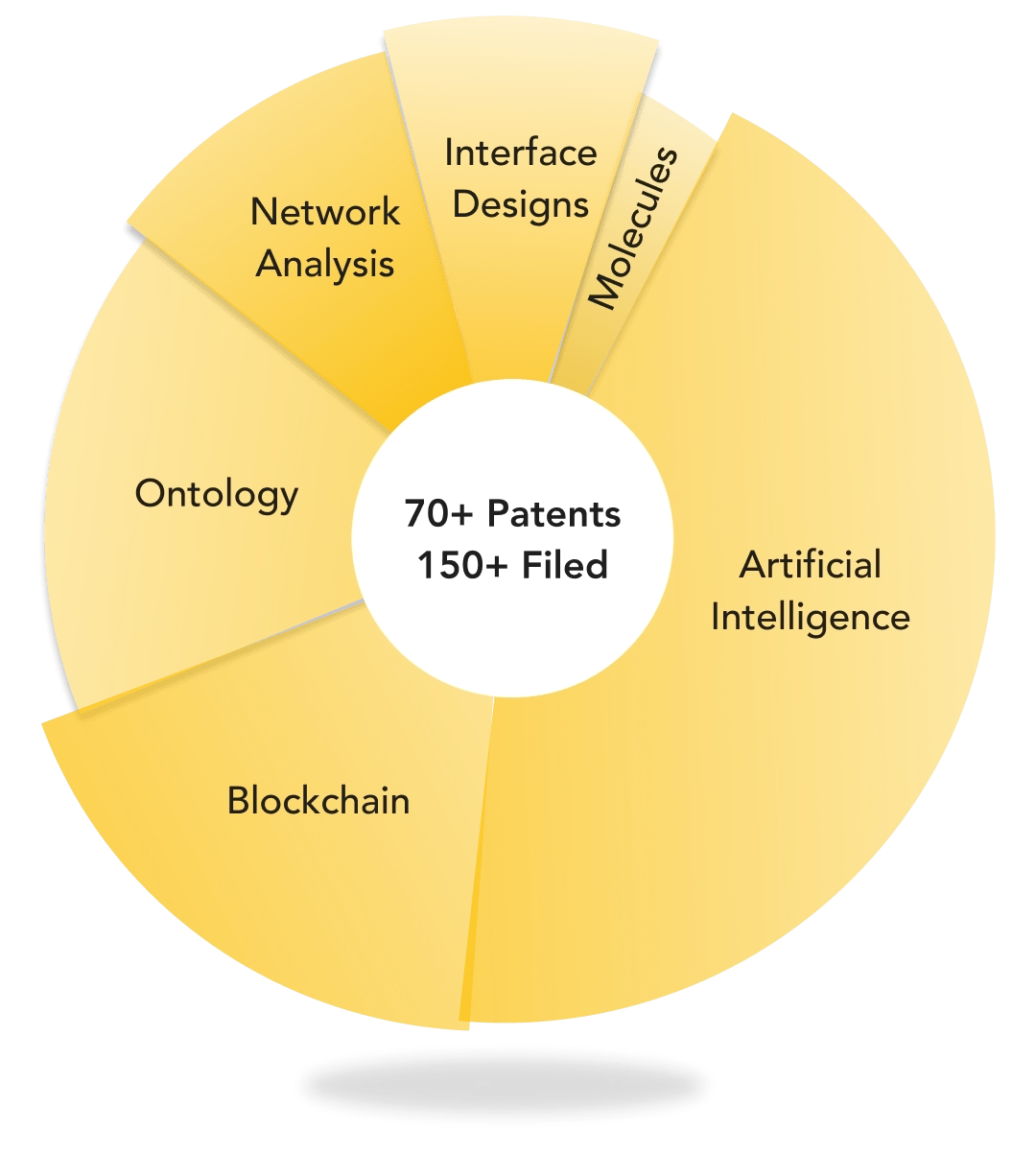

Fully automated with AI algorithms and verified monthly

Largely automated with AI algorithms and sanity tested by experts

Industry standard functional testing as per SDLC process

Largely manually validated by medical experts under the guidance of CMO/CSO

![]()

Innoplexus wins Horizon Interactive Gold Award for Curia App

Read More